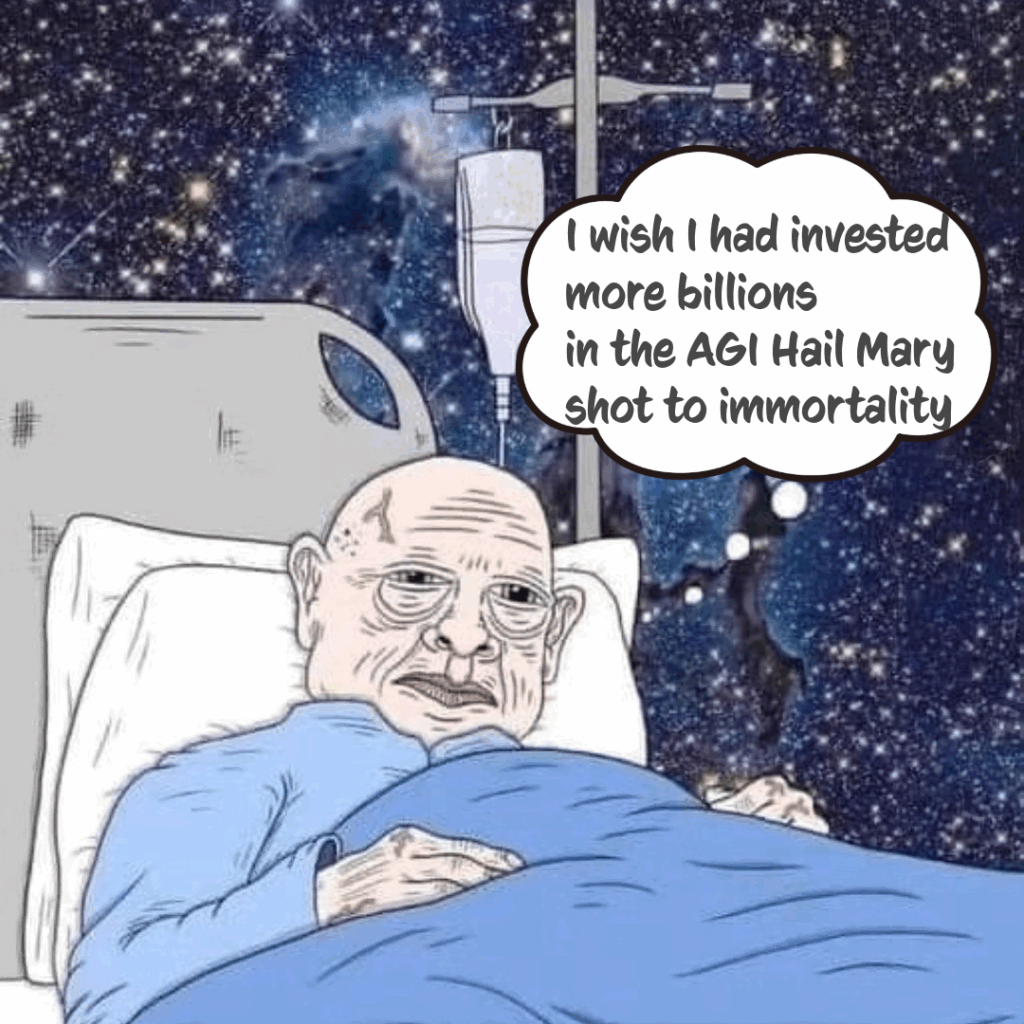

Al Alignment, which we cannot define, will be solved by rules on which none of us agree, based on values that exist in conflict, for a future technology that we do not know how to build, which we could never fully understand, must be provably perfect to prevent unpredictable and untestable scenarios for failure, of a machine whose entire purpose is to outsmart all of us and think of all possibilities that we did not.