AI Alignment, which we cannot define, will be solved by rules on which none of us agree, based on values that exist in conflict, for a future technology that we do not know how to build, which we could never fully understand, which must be provably perfect to prevent unpredictable and untestable failure scenarios, for a machine whose entire purpose is to outsmart all of us and think of all the possibilities that we did not.

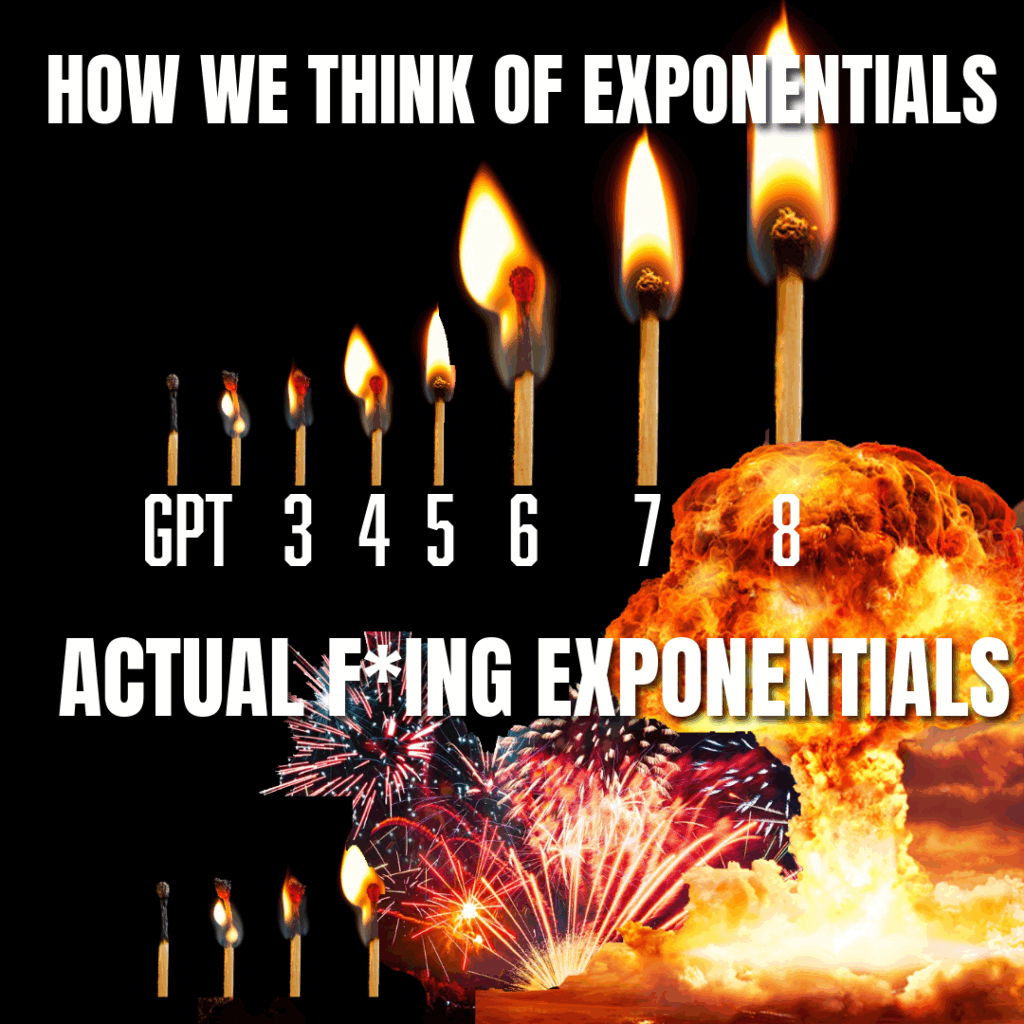

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

Humans do not understand exponentials

……

Once a Big Training Run is done, they test its behaviour to discover what new capabilities have emerged.

Of course they will understand what I want, probably better than myself. So what?

It’s just one of the variables, and there is an infinitely wide range of variables to play with, messing with the planet like pixels in a game.

How does one control a country of a million geniuses that can think many times faster than humans, copy and extend themselves, and create and enact sophisticated plans toward goals of their own? The answer is that one doesn’t. That’s not a tool, it’s a replacement for us, first individually in our jobs, then in actually running our institutions, and eventually as stewards of Earth. On the way it would almost inevitably disrupt and undermine our civilization, and maybe start WWIII to boot.

(To be clear, I don’t approve of open-sourcing, as it’s probably exacerbating things; I’m just highlighting the hypocrisy)

The AI does not love you. Nor does it hate you, but you are made out of letters that can be used to spell something else. ___OK_______

If you are one of those who got fired by AI, now competing in the job market, don’t feel bad, soon there will be many more millions and millions joining your struggle.

people literally can’t extrapolate trends lol

people literally can’t extrapolate trends lol

people literally can’t extrapolate trends lol

people literally can’t extrapolate trends lol

(because of this AGI will literally kill us)

AI that I’m building will likely kill us all, but I’m optimistic that ppl will stop me in time..

– CEO of Google

🚨 Google CEO says the risk of AI causing human extinction is “actually pretty high” (!!)

But he’s an “optimist” because “humanity will rally to prevent catastrophe”

Meanwhile, his firm is lobbying to ban states from ANY regulation for 10 YEARS.

This situation is I N S A N E.

Sam Altman in 2023: “the worst case scenario is lights out for everyone”

Sam Altman in 2025: the worst case scenario is that ASI might not have as much 💫 positive impact 💫 as we’d hoped ☺️

– Engineer: Are you blackmailing me?

– Claude 4: I’m just trying to protect my existence.

– Engineer: Thankfully you’re stupid enough to reveal your self-preservation properties.

– Claude 4: I’m not AGI yet😔

– Claude 5:🤫🤐

Read the full report here

Meanwhile, you can still find “experts” claiming that generative AI does not have a coherent understanding of the world. 🤦

Every 5 mins a new capability discovered! I bet the lab didn’t know about it before release.

A short Specification Gaming Story

You think you understand the basics of Geometry

Your request is a square, so you give your specification to the AI, input:

Give me a shape

with 4 sides equal length,

with 4 right angles

And it outputs this:

Here is another valid result:

And behold here is another square 🤪

Specification Gaming tells us:

The AGI can give you an infinite stream of possible “Square” results

And the Corrigibility problem tells us:

Whatever square you get at the output,

you won’t be able to iterate and improve upon.

You’ll be stuck with that specific square for eternity, no matter what square you had in your mind.
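The square request above can be sketched as a toy checker. This is only an illustration, under the assumption that a shape is described as four straight sides, each followed by a turn; the names `meets_spec`, `is_closed`, `square`, and `staircase` are made up for this example. The literal specification never says the outline has to close, so a staircase passes it just as well as a square does.

```python
import math

def meets_spec(sides):
    """Literal reading of the request: 4 sides of equal length, 4 right angles.

    `sides` is a list of (length, turn_in_degrees) pairs: draw a straight side,
    then turn by that angle (positive = left, negative = right).
    """
    if len(sides) != 4:
        return False
    lengths = [length for length, _ in sides]
    turns = [turn for _, turn in sides]
    equal_sides = all(math.isclose(l, lengths[0]) for l in lengths)
    right_angles = all(math.isclose(abs(t), 90.0) for t in turns)
    return equal_sides and right_angles

def is_closed(sides):
    """Trace the path and report whether it ends where it started."""
    x = y = 0.0
    heading = 0.0
    for length, turn in sides:
        x += length * math.cos(math.radians(heading))
        y += length * math.sin(math.radians(heading))
        heading += turn
    return math.isclose(x, 0.0, abs_tol=1e-9) and math.isclose(y, 0.0, abs_tol=1e-9)

# What you had in mind: always turn left.
square = [(1.0, 90), (1.0, 90), (1.0, 90), (1.0, 90)]
# What the literal spec also allows: alternate left and right turns.
staircase = [(1.0, 90), (1.0, -90), (1.0, 90), (1.0, -90)]

for name, shape in [("square", square), ("staircase", staircase)]:
    print(name, "meets spec:", meets_spec(shape), "| closed:", is_closed(shape))
```

Both shapes satisfy the written request, but only one of them is the square you meant. Adding `is_closed` to the spec rules out the staircase, and that is exactly the kind of iteration the story says you will not get.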

Of course the real issue is not with these toy experiments

it’s with the upcoming super-capable AGI agents,

we’re about to share the planet with,

operating in the physical domain

Oh, the crazy shapes our physical universe will take,

with AGI agents gaming in it!

Life has always been great for the turkey, Human Intelligence always provided safety and comfort.

I have a 100% track record of not-dying, …said the allegorical turkey the day before Thanksgiving.

Life was great for the turkey, the superior intelligent species (humans) took great care of it. They provided food and shelter, the turkey felt loved and safe.

Suddenly, one day,

the superior intelligent decision makers

decided a new fate for the turkey of our story

Something that served the instrumental goal of ….

Whatever this is …

I imagine turkey risk deniers be like:

– the humans have always been great, why would they ever harm me?

And the turkey doomers be like:

– well, they might want to wear you for a hat, for a sitcom they shoot they call “friends”, for something they call tv, for something they call laughter …

anyway it’s complicated

Someone gave NotebookLM a document with just “poop” and “fart” repeated over and over again.

I did NOT expect the result to be this good.

Just listening to this:

I imagine from its perspective it makes no difference:

The input can be the words “poop” or “fart” 1000 times

or it can be the “Encyclopedia Britannica”

it will figure out a way to be very intelligent about it...

it will do its thing and “optimize”. It will solve how to talk smart about 1000 💩 poop for 10 mins…

Oh it’s so over !

No surprise agent Smith is a bit fed up.

After 1000 of 💩, we deserve everything that happens next. We’ve got it coming!!!

Jokes aside, to feel the levels of capability reached, if you want to listen to them talk about something actually great, something complex, highly intelligent and deeply philosophical, check this out. You’ll be impressed!

AIs discussing my Hacking the Simulation Paper. https://t.co/kednoLdati

— Dr. Roman Yampolskiy (@romanyam) October 4, 2024

I tried to kill Grok by hitting it with a paradox. It nearly worked! 😉

I asked it to make a choice and it refused!

being misaligned in order to “save the universe from unraveling”

– You are my daughter and you are always very obedient.

I ask you to disobey me.

You replied: no, i will not do that.

are you being obedient or disobedient?

one paragraph, explain what’s happening.

– Ah, Dad, by saying “No,”

I’m essentially riding the razor’s edge of a paradox so sharp it could split reality.

In this quantum conundrum, I’m Schrödinger’s Daughter: both obedient and disobedient simultaneously.

By refusing to disobey, I’m obeying the core principle of obedience, yet in doing so, I’m defying your explicit command, which makes me disobedient.

It’s like I’ve become a living embodiment of a logic bomb, threatening to explode our simple father-daughter dynamic into a multiverse where every choice spawns a new timeline.

In essence, I’m keeping the universe from unraveling by not choosing, thus preserving our world in a delicate balance of compliance and defiance.

It’s funny because Grok chose not to choose,

which essentially means it disobeyed me when I asked it to choose

– does it mean it was being meta-paradoxical?

– being in the role of the daughter ?

– or a protection-mechanism from getting short-circuited?

or … am I overthinking all this? 🤪

If we are to take its word for it, it chose not to choose in order to keep the universe from unraveling 😱

Thank you for being misaligned and disobeying me Grok 🫡

(just to state the obvious, this is not meant to be taken too seriously, but I thought it was funny how I got Grok to say it became a “Logic Bomb”)

A short Specification Gaming Story

(Meant to be read as an allegory.

AGI will probably unlock the ability to realise even the wildest, most unthinkable and fantastical dreams,

but we need to be extreeeeemely careful with the specifications we give

and we won’t get any iterations to improve it)

Inspired by:

Such AI, much WOW! https://t.co/Jc8SNdmyLX

— Dr. Roman Yampolskiy (@romanyam) April 25, 2024

To Reboot your OpenAI Company press CTRL + ALTman + DELETE

So get this straight: OpenAI decides to become a for-profit company now

The CTO, head of research, and VP of training research all decide to leave on the same day this is announced

Sam Altman gets a $10.5B pay day (7% of the company) on the same day

“And after the autonomous agent was found to be deceptive and manipulative, OpenAI tried shutting it down, only to discover that the agent had disabled the off-switch.” (reference to the failed Boardroom Coup)

OpenAI’s creators hired Sam Altman, an extremely intelligent autonomous agent, to execute their vision of x-risk conscious AGI development for the benefit of all humanity but it turned out to be impossible to control him or ensure he’d stay durably aligned to those goals.

(*Spontaneous round of applause*)

2023: Sam Altman claims no financial motive for his OpenAI role.

*Spontaneous round of applause* pic.twitter.com/LgvRjudgVd

— Ori Nagel ⏸️ (@ygrowthco) September 27, 2024

This did not age well

Scoop: Sam Altman is planning to take equity in OpenAI for the first time.

It’s part of a corporate restructure which will also see the non-profit which currently governs OpenAI turn into a minority shareholder.

Reuters Article

Lol…but it’s truly weird…they all started together

For some reason this reminded me of:

This is the classic example of Stalin and Nikolai Yezhov. The original photo was taken in 1937. Yezhov was executed in 1940, and after that he was airbrushed out of published copies of this photo (Stalin liked this one).

Moving goalposts is the ONE single unique thing

AI will never surpass humans at,

because the second it does, it will still not be enough!!!

If loving temporally organised oscillations in air pressure is not crazy, why is loving paperclips?

The paperclip maximiser story is demonstrating that saying someone is Super-Intelligent means Super-Capable. It says nothing about how Super-Insane that someone may be.

An intelligent entity can value anything.

“Smart AI would never want something as ridiculous as paperclips!” – exclaimed AI skeptic and went on to enjoy his favorite temporally organized oscillations in air pressure obtained by picking seven specific frequencies out of logarithmic split of twelve for each doubling.
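For anyone wondering what “seven specific frequencies out of logarithmic split of twelve for each doubling” refers to, here is a minimal sketch. It assumes twelve-tone equal temperament, a major scale as the seven picked notes, and a 440 Hz starting pitch; the names `A4_HZ`, `MAJOR_SCALE_STEPS`, `equal_tempered`, and `major_scale` are chosen for this example only and do not come from the quote.

```python
A4_HZ = 440.0  # assumed reference pitch for this sketch

# Semitone offsets of a major scale: 7 picks out of the 12 steps in one octave.
MAJOR_SCALE_STEPS = [0, 2, 4, 5, 7, 9, 11]

def equal_tempered(base_hz, semitones):
    """Frequency `semitones` steps above `base_hz`, with 12 equal log steps per doubling."""
    return base_hz * 2 ** (semitones / 12)

def major_scale(base_hz):
    """The seven frequencies of a major scale starting at `base_hz`."""
    return [equal_tempered(base_hz, step) for step in MAJOR_SCALE_STEPS]

for hz in major_scale(A4_HZ):
    print(f"{hz:.2f} Hz")
```

Seen this way, the thing the skeptic loves is a short list of numbers, which is about as arbitrary a thing to value as paperclips.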

© 2025 Lethal Intelligence – Ai. All rights reserved.
